In this post I will show how Azure Durable Functions can complement your Sitecore solution and help improve its performance.

Problem

We took over a Sitecore solution whose content management server was running very slowly. Intermittently the Sitecore client would become unresponsive and crash.

The problem was caused by a large number of CPU-, data- and bandwidth-intensive scheduled tasks. These tasks retrieved a wide range of data from a number of web services, aggregated the data and performed complicated calculations, of which only a small subset of the results was presented on the website.

Solution

As the solution was already hosted in Azure, a natural fit was to offload the heavy lifting from the content management server to Azure Functions, which would do the data retrieval and calculations and provide the results for the website. First, a very brief overview of the pros and cons of Azure Functions.

Pros

- Serverless execution model

- Dynamic Scaling

- Micro pricing

- Security

- Wide range of triggers

  - HTTP, Timer (CRON), Azure Storage changes, Azure Queue, messages from Service Bus, etc.

Cons

- Stateless

- Execution time limit (on the Consumption plan: default 5 minutes, max 10)

- Concurrency

The main challenge with plain Azure Functions was that most of the scheduled tasks could take more than 10 minutes to complete and required state management. This is where Azure Durable Functions came to the rescue.

Azure Durable Functions

Durable Functions is an extension of Azure Functions and Azure WebJobs that lets you write stateful functions in a serverless environment. The extension manages state, checkpoints, and restarts for you, so it is possible to implement code that runs for a long time.

In addition, if a function call fails, for example because a web request times out, you can define how long the durable function should wait and how many times it should retry before failing. Behind the scenes, the Durable Functions extension is built on top of the Durable Task Framework, an open-source library on GitHub for building durable task orchestrations.
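As a minimal sketch of the retry behaviour described above, assuming the in-process C# model with Durable Functions 2.x: the orchestrator name `FetchWithRetry`, the activity name `CallWebService` and the chosen intervals are hypothetical and only serve the illustration.

```csharp
using System;
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;

public static class RetryExample
{
    [FunctionName("FetchWithRetry")] // hypothetical orchestrator name
    public static async Task<string> Run(
        [OrchestrationTrigger] IDurableOrchestrationContext context)
    {
        // Wait 5 seconds before the first retry and give up after 3 attempts.
        var retryOptions = new RetryOptions(
            firstRetryInterval: TimeSpan.FromSeconds(5),
            maxNumberOfAttempts: 3)
        {
            // Double the wait between successive attempts.
            BackoffCoefficient = 2.0
        };

        // "CallWebService" is a hypothetical activity that performs the HTTP call.
        // If it throws, the extension waits and retries according to retryOptions.
        string url = context.GetInput<string>();
        return await context.CallActivityWithRetryAsync<string>(
            "CallWebService", retryOptions, url);
    }
}
```

If every attempt fails, the exception surfaces in the orchestrator, where it can be caught and handled like any other exception.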

Advantages of Durable Functions

- They define workflows in code. No JSON schemas or designers are needed.

- They can call other functions either synchronously or asynchronously.

- Output from called functions can be saved to local variables.

- They automatically checkpoint their progress whenever the function awaits.

- Local state is never lost, even if the process recycles or the VM reboots.

- They are easy to unit test.

- They can run for a very long time, in theory forever.

- Cost effective, as you do not pay for execution time whilst waiting for tasks to complete.

Here is a brief introduction to the most common Durable Functions patterns.

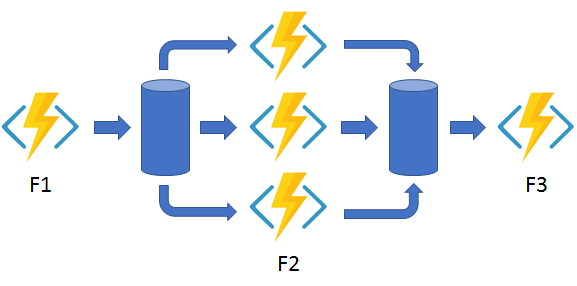

Pattern 1 – Function chaining

Function chaining refers to the pattern of executing a sequence of functions in a particular order. Often the output of one function needs to be applied to the input of another function.

The code below is an example of how you would achieve this:

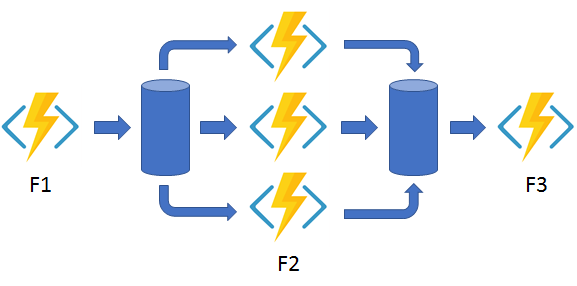
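In text form, a chaining orchestrator could be sketched as follows. This assumes the in-process C# model with Durable Functions 2.x. The function names (`ChainingOrchestrator`, `RetrieveData`, `AggregateData`, `CalculateResults`) are hypothetical stand-ins for the kind of work described in this post, not the actual code from the project.

```csharp
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;

public static class ChainingExample
{
    [FunctionName("ChainingOrchestrator")]
    public static async Task<string> Run(
        [OrchestrationTrigger] IDurableOrchestrationContext context)
    {
        // Each call is checkpointed; the output of one step feeds the next.
        string raw = await context.CallActivityAsync<string>("RetrieveData", null);
        string aggregated = await context.CallActivityAsync<string>("AggregateData", raw);
        return await context.CallActivityAsync<string>("CalculateResults", aggregated);
    }

    // Activities do the real work and may use I/O freely.
    [FunctionName("RetrieveData")]
    public static string RetrieveData([ActivityTrigger] string input)
    {
        // Placeholder: a real activity would call the external web services here.
        return "raw-data";
    }

    [FunctionName("AggregateData")]
    public static string AggregateData([ActivityTrigger] string raw)
    {
        return raw + ":aggregated"; // placeholder logic
    }

    [FunctionName("CalculateResults")]
    public static string CalculateResults([ActivityTrigger] string aggregated)
    {
        return aggregated + ":calculated"; // placeholder logic
    }
}
```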

Pattern 2 – Fan-out/fan-in

Fan-out/fan-in refers to the pattern of executing multiple functions in parallel, and then waiting for all to finish. Often some aggregation work is done on results returned from the functions. This is perfect when you want to do a lot of things in parallel, to reduce the time taken to complete the task and then aggregate/process all the results.

Below is an example of how the code could look
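A minimal fan-out/fan-in sketch, assuming the in-process C# model with Durable Functions 2.x. The activity names `GetSources`, `ProcessSource` and `StoreTotal` are hypothetical: the first returns the list of work items, the second processes one item, and the third persists the aggregated result.

```csharp
using System.Collections.Generic;
using System.Linq;
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;

public static class FanOutFanInExample
{
    [FunctionName("FanOutFanInOrchestrator")]
    public static async Task<int> Run(
        [OrchestrationTrigger] IDurableOrchestrationContext context)
    {
        // Ask an activity which sources need processing.
        string[] sources = await context.CallActivityAsync<string[]>("GetSources", null);

        // Fan out: start one activity per source without awaiting each in turn.
        var tasks = new List<Task<int>>();
        foreach (string source in sources)
        {
            tasks.Add(context.CallActivityAsync<int>("ProcessSource", source));
        }

        // Fan in: wait until all activities have finished.
        int[] results = await Task.WhenAll(tasks);

        // Aggregate the results and hand them to a final activity.
        int total = results.Sum();
        await context.CallActivityAsync("StoreTotal", total);
        return total;
    }
}
```

`Task.WhenAll` is safe here because every task in the list was created through the orchestration context, so the framework can replay it deterministically.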

Pattern 3 – Monitoring

The monitor pattern refers to a flexible recurring process in a workflow – for example, polling until certain conditions are met. A regular timer-trigger can address a simple scenario, such as a periodic clean-up job, but its interval is static and managing instance lifetimes becomes complex. Durable Functions enables flexible recurrence intervals, task lifetime management, and the ability to create multiple monitor processes from a single orchestration.

This reverses the usual arrangement: instead of exposing an endpoint for an external client to monitor a long-running operation, the long-running monitor consumes an external endpoint and waits for some state change. See the example below.
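A monitor could be sketched like this, assuming the in-process C# model with Durable Functions 2.x. The activities `GetJobStatus` and `ProcessJobResult`, the `"Completed"` status value, the one-hour expiry and the polling intervals are all hypothetical choices for illustration.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;

public static class MonitorExample
{
    [FunctionName("MonitorOrchestrator")]
    public static async Task Run(
        [OrchestrationTrigger] IDurableOrchestrationContext context)
    {
        string jobId = context.GetInput<string>();

        // Use the context's clock so the value is identical on every replay.
        DateTime expiry = context.CurrentUtcDateTime.AddHours(1);
        int pollingSeconds = 30;

        while (context.CurrentUtcDateTime < expiry)
        {
            // The activity calls the external endpoint and reports its state.
            string status = await context.CallActivityAsync<string>("GetJobStatus", jobId);
            if (status == "Completed")
            {
                await context.CallActivityAsync("ProcessJobResult", jobId);
                break;
            }

            // Durable timer: the orchestrator is unloaded while it waits.
            DateTime nextCheck = context.CurrentUtcDateTime.AddSeconds(pollingSeconds);
            await context.CreateTimer(nextCheck, CancellationToken.None);

            // Flexible interval: back off, up to a maximum of 10 minutes.
            pollingSeconds = Math.Min(pollingSeconds * 2, 600);
        }
    }
}
```

Unlike a timer trigger with a fixed CRON expression, the interval here can change between iterations and the monitor ends itself once the condition is met or the expiry passes.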

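IsReplaying

One thing that catches people out is that the orchestrator code is re-run from the start of the function after each await completes. Calls that already finished are not executed again; their results are read back from the execution history. Everything else in the orchestrator does run again, so for logging and similar side effects you need to check IsReplaying to make sure they only happen once.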
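A short sketch of both ways to avoid duplicate log entries, assuming the in-process C# model with Durable Functions 2.x. The orchestrator and activity names are hypothetical.

```csharp
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;
using Microsoft.Extensions.Logging;

public static class ReplayLoggingExample
{
    [FunctionName("LoggingOrchestrator")]
    public static async Task Run(
        [OrchestrationTrigger] IDurableOrchestrationContext context,
        ILogger log)
    {
        // Option 1: guard each log call by hand.
        if (!context.IsReplaying)
        {
            log.LogInformation("Starting data retrieval");
        }

        // "RetrieveData" is a hypothetical activity.
        await context.CallActivityAsync("RetrieveData", null);

        // Option 2: wrap the logger once; it only writes when not replaying.
        ILogger safeLog = context.CreateReplaySafeLogger(log);
        safeLog.LogInformation("Data retrieved");
    }
}
```

Without the guard, the first message would be written again when the orchestrator replays after the activity completes.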

Durable Functions – Orchestrator code constraints

There are a number of code constraints that must be adhered to when writing Durable Functions orchestrators.

- Code must be deterministic.

  - It will be replayed multiple times and must produce the same result each time.

  - For example, no direct calls to get the current date/time, get random numbers, generate random GUIDs, or call into remote endpoints.

  - For the current time, timers and new GUIDs, use the replay-safe APIs provided by IDurableOrchestrationContext.

- Non-deterministic operations must be done in activity functions.

  - This includes any interaction with other input or output bindings. This ensures that any non-deterministic values will be generated once on the first execution and saved into the execution history. Subsequent executions will then use the saved value automatically.

- Orchestrator code should be non-blocking.

  - For example, that means no I/O and no calls to Thread.Sleep or equivalent APIs.

- Orchestrator code must never initiate any async operation, except by using the IDurableOrchestrationContext API.

  - For example, no Task.Run, Task.Delay or HttpClient.SendAsync.

  - The Durable Task Framework executes orchestrator code on a single thread and cannot interact with any other threads that could be scheduled by other async APIs.

- Infinite loops should be avoided.

  - Because the Durable Task Framework saves execution history as the orchestration function progresses, an infinite loop could cause an orchestrator instance to run out of memory.

  - For infinite loop scenarios, use APIs such as ContinueAsNew to restart the function execution and discard previous execution history.
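The constraints above can be illustrated with a sketch of an "eternal" orchestrator that refreshes data once an hour. This assumes the in-process C# model with Durable Functions 2.x. The orchestrator name `PeriodicRefresh`, the activity name `RefreshData` and the one-hour interval are hypothetical.

```csharp
using System;
using System.Threading;
using System.Threading.Tasks;
using Microsoft.Azure.WebJobs;
using Microsoft.Azure.WebJobs.Extensions.DurableTask;

public static class ConstraintsExample
{
    [FunctionName("PeriodicRefresh")]
    public static async Task Run(
        [OrchestrationTrigger] IDurableOrchestrationContext context)
    {
        // Deterministic GUID from the context, instead of Guid.NewGuid().
        Guid runId = context.NewGuid();

        // All I/O (web service calls, bindings) happens inside the activity.
        await context.CallActivityAsync("RefreshData", runId);

        // Replay-safe clock, instead of DateTime.UtcNow.
        DateTime nextRun = context.CurrentUtcDateTime.AddHours(1);

        // Durable timer, instead of Thread.Sleep or Task.Delay.
        await context.CreateTimer(nextRun, CancellationToken.None);

        // Restart with a fresh history, instead of a while (true) loop.
        context.ContinueAsNew(null);
    }
}
```

Because `ContinueAsNew` discards the previous execution history, the history stays small no matter how long the orchestration keeps running.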

Result

By migrating all the long-running CPU-, data- and bandwidth-intensive tasks to Azure Durable Functions, the performance of the Sitecore solution went from painful to fantastic.

Unfortunately, it is very common for Sitecore solutions to take on tasks that are not the website's responsibility, but pairing them with Azure Functions can help mitigate this issue.

An additional benefit was that the website was isolated from third-party system changes. When an external system changed, only the Azure Functions had to be modified and deployed, so there was no downtime for the Sitecore solution.

Anyway, I hope Sitecore developers will consider Azure Functions to enhance their Sitecore solutions.