We recently took over an existing Sitecore solution, where the customer had a policy that test coverage must be over 90%, which is fantastic.

Unfortunately, I found a very big class that had the ExcludeFromCodeCoverage attribute applied to it without a justification!

You must always specify a Justification to explain why code is excluded from test coverage. There are many valid reasons to exclude certain classes from test coverage, e.g., DTOs, program entry code, DI setup, sealed classes and 3rd party services.
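For example, here is a minimal sketch of a justified exclusion, plus a small reflection check that could run in a test to catch exclusions added without a reason. `AppointmentDto`, `UnjustifiedExclusion` and `CoverageExclusionAudit` are hypothetical names, not part of the solution. The `Justification` property on `ExcludeFromCodeCoverageAttribute` is available from .NET 5 onwards.

```csharp
using System;
using System.Diagnostics.CodeAnalysis;

// Hypothetical DTO: it only carries data and has no logic,
// so excluding it from coverage is a legitimate, documented decision.
[ExcludeFromCodeCoverage(Justification = "DTO with no behaviour; only auto-properties.")]
public class AppointmentDto
{
    public DateTime Start { get; set; }
    public DateTime End { get; set; }
    public string Subject { get; set; } = string.Empty;
}

// Hypothetical example of what this post argues against: excluded, but no reason given.
[ExcludeFromCodeCoverage]
public class UnjustifiedExclusion
{
}

// Hypothetical helper a test could call to flag exclusions that lack a justification.
public static class CoverageExclusionAudit
{
    public static bool HasJustification(Type type)
    {
        var attribute = Attribute.GetCustomAttribute(type, typeof(ExcludeFromCodeCoverageAttribute))
            as ExcludeFromCodeCoverageAttribute;

        // Types without the attribute are not excluded, so there is nothing to justify.
        return attribute == null || !string.IsNullOrWhiteSpace(attribute.Justification);
    }
}
```

A test could loop over all types in an assembly and fail when `HasJustification` returns false, which turns the "always justify" rule into something the build enforces.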

The Problem

The following class has a lot of business logic and is excluded from test coverage because it has the ExcludeFromCodeCoverage attribute. Let's not get into the fact that no class should have multiple responsibilities, and definitely should not have 750+ lines of code.

But it should definitely have test coverage for the logic it provides.

```csharp
[ExcludeFromCodeCoverage]
public class SynchronizeOutlookAppointmentsService
{
    private const int MAX_FREEBUSY_ATTENDEES = 100;
    private const int MAX_FREEBUSY_PERIOD = 60;

    private readonly ExchangeService _exchangeService;
    private readonly ILogger<SynchronizeOutlookAppointmentsService> _logger;

    public SynchronizeOutlookAppointmentsService(
        [NotNull] ExchangeService exchangeService,
        [NotNull] ILogger<SynchronizeOutlookAppointmentsService> logger)
    {
        Assert.ArgumentNotNull(logger, nameof(logger));
        Assert.ArgumentNotNull(exchangeService, nameof(exchangeService));

        _exchangeService = exchangeService;
        _logger = logger;
    }

    public void SynchronizeOutlookAppointments()
    {
        DateTime now = DateTime.Now;

        var availabilities = GetAvailability(now, GetToDate(now), GetEmailAddress());

        CheckWorkSchedules(now, availabilities);
        CheckRules(now, availabilities);
        CheckPlannedMeetings(now, availabilities);

        var delete = ShouldBeDeleted(availabilities);
        var update = ShouldBeUpdated(availabilities);
        var added = ShouldBeAdded(availabilities);

        HandleDeletedAppointments(now, delete);
        HandleUpdatedAppointments(now, update);
        HandleAddedAppointments(now, added);
    }

    // 700+ more lines, with lots of domain logic and rules
}
```

I assume the ExcludeFromCodeCoverage attribute was applied because the class depends on the ExchangeService class from Exchange Web Services (EWS). Unfortunately, most of the classes in EWS are sealed and have internal constructors.

So it is not possible to mock these classes, and the logic can't be unit tested unless you have a test instance of Exchange Server that you can call and set up with the test data relevant to the tests.

Sitecore (like most other software) has sealed classes too, which makes testing difficult. When faced with an API that returns sealed classes, how do we minimize what cannot be tested?

Solution

The solution is to isolate/hide the dependency on the sealed classes exposed by EWS. There are five steps:

- Duplicate the sealed classes.

- Convert the sealed classes to the duplicated classes.

- Introduce an interface to abstract/hide the use of the classes.

- Implement the interface to call ExchangeService.

- Inject the interface into the class with the business logic.

Step 1 – Duplicate the sealed classes

We need to check availability and the details of any events in the user's calendar, so the following EWS classes were duplicated. Luckily, not every EWS type is a sealed class with an internal constructor: ServiceError and LegacyFreeBusyStatus are enums, so they can be reused as-is.

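```csharp
using System;
using System.Collections.Generic;
using Microsoft.Exchange.WebServices.Data; // ServiceError and LegacyFreeBusyStatus are EWS enums, reused as-is

public class Availability
{
    public ServiceError ErrorCode { get; set; }
    public string ErrorMessage { get; set; } = string.Empty;
    public IEnumerable<CalendarEvent> Events { get; set; }
}

public class CalendarEvent
{
    public DateTime Start { get; set; }
    public DateTime End { get; set; }
    public LegacyFreeBusyStatus FreeBusyStatus { get; set; }
}
```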

Step 2 – Convert the sealed classes to duplicated classes

Introduce a factory class that takes the sealed classes and converts them into the duplicated classes.

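```csharp
using System.Collections.Generic;
using System.Linq;
using Microsoft.Exchange.WebServices.Data;

public class AvailabilityFactory
{
    // Maps the sealed EWS results to the duplicated Availability class.
    // Returns null when getUserAvailabilityResults (or its AttendeesAvailability) is null.
    public IEnumerable<Availability> Create(GetUserAvailabilityResults getUserAvailabilityResults)
    {
        return getUserAvailabilityResults?
            .AttendeesAvailability?
            .Select(attendeeAvailability => new Availability()
            {
                ErrorCode = attendeeAvailability.ErrorCode,
                ErrorMessage = attendeeAvailability.ErrorMessage,
                Events = Create(attendeeAvailability.CalendarEvents)
            }).ToList();
    }

    // The EWS CalendarEvent is fully qualified because its name clashes with the duplicated CalendarEvent class.
    private IEnumerable<CalendarEvent> Create([NotNull] IReadOnlyCollection<Microsoft.Exchange.WebServices.Data.CalendarEvent> calendarEvents)
    {
        return calendarEvents
            .Select(calendarEvent => new CalendarEvent()
            {
                Start = calendarEvent.StartTime,
                End = calendarEvent.EndTime,
                FreeBusyStatus = calendarEvent.FreeBusyStatus,
            }).ToList();
    }
}
```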

Step 3 – Introduce an Interface

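Introduce an IAvailabilityRepository interface to abstract/hide the use of the ExchangeService class and the related sealed classes it returns. The parameters still use EWS types (AttendeeInfo, TimeWindow and AvailabilityData), but that does not block testing: AttendeeInfo and TimeWindow have public constructors and AvailabilityData is an enum, so tests can create them directly.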

```csharp
using System.Collections.Generic;
using Microsoft.Exchange.WebServices.Data;

public interface IAvailabilityRepository
{
    IEnumerable<Availability> Get(
        IEnumerable<AttendeeInfo> attendees,
        TimeWindow timeWindow,
        AvailabilityData requestedData);
}
```

Step 4 – Implement the IAvailabilityRepository to call ExchangeService

This class now contains just two lines that can't be tested, so excluding it from coverage, this time with a justification, costs very little.

```csharp
using System.Collections.Generic;
using System.Diagnostics.CodeAnalysis;
using Microsoft.Exchange.WebServices.Data;
using Sitecore.Diagnostics;

[ExcludeFromCodeCoverage(Justification = "Can't test as it requires access to Outlook, and the ExchangeService class is sealed")]
public class AvailabilityRepository : IAvailabilityRepository
{
    private readonly ExchangeService _exchangeService;
    private readonly AvailabilityFactory _availabilityFactory;

    public AvailabilityRepository(
        [NotNull] ExchangeService exchangeService,
        [NotNull] AvailabilityFactory availabilityFactory)
    {
        Assert.ArgumentNotNull(exchangeService, nameof(exchangeService));
        Assert.ArgumentNotNull(availabilityFactory, nameof(availabilityFactory));

        _exchangeService = exchangeService;
        _availabilityFactory = availabilityFactory;
    }

    public IEnumerable<Availability> Get(
        IEnumerable<AttendeeInfo> attendees,
        TimeWindow timeWindow,
        AvailabilityData requestedData)
    {
        // The only two lines that need a live Exchange server.
        var getUserAvailabilityResults = _exchangeService.GetUserAvailability(attendees, timeWindow, requestedData);
        return _availabilityFactory.Create(getUserAvailabilityResults);
    }
}
```

In addition to the above implementation, you can also create a fake implementation for local debugging and testing, for example one that logs to a file, or one that reads and saves data from a database.
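A minimal sketch of such a fake, assuming an in-memory dictionary of canned events per attendee rather than a file or database. To compile on its own, it redeclares the Step 1 and Step 3 types and adds small stand-ins for the EWS types (`ServiceError`, `LegacyFreeBusyStatus`, `AttendeeInfo`, `TimeWindow`, `AvailabilityData`); in the real solution those come from EWS and must not be redeclared. `InMemoryAvailabilityRepository`, `AddEvent` and `CallCount` are hypothetical names.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Stand-ins for the EWS types (assumption: only the members this sketch needs).
// In the real solution these come from Microsoft.Exchange.WebServices.Data.
public enum ServiceError { NoError }
public enum LegacyFreeBusyStatus { Free, Busy }
public enum AvailabilityData { FreeBusy }

public class AttendeeInfo
{
    public AttendeeInfo(string smtpAddress) { SmtpAddress = smtpAddress; }
    public string SmtpAddress { get; }
}

public class TimeWindow
{
    public TimeWindow(DateTime startTime, DateTime endTime) { StartTime = startTime; EndTime = endTime; }
    public DateTime StartTime { get; }
    public DateTime EndTime { get; }
}

// Duplicated classes (Step 1) and the interface (Step 3).
public class Availability
{
    public ServiceError ErrorCode { get; set; }
    public string ErrorMessage { get; set; } = string.Empty;
    public IEnumerable<CalendarEvent> Events { get; set; }
}

public class CalendarEvent
{
    public DateTime Start { get; set; }
    public DateTime End { get; set; }
    public LegacyFreeBusyStatus FreeBusyStatus { get; set; }
}

public interface IAvailabilityRepository
{
    IEnumerable<Availability> Get(IEnumerable<AttendeeInfo> attendees, TimeWindow timeWindow, AvailabilityData requestedData);
}

// Hypothetical fake: canned events per attendee, no Exchange server needed.
public class InMemoryAvailabilityRepository : IAvailabilityRepository
{
    private readonly Dictionary<string, List<CalendarEvent>> _eventsByAttendee =
        new Dictionary<string, List<CalendarEvent>>(StringComparer.OrdinalIgnoreCase);

    // Number of Get calls, so a test can check how often availability was queried.
    public int CallCount { get; private set; }

    public InMemoryAvailabilityRepository AddEvent(string smtpAddress, DateTime start, DateTime end, LegacyFreeBusyStatus status)
    {
        if (!_eventsByAttendee.TryGetValue(smtpAddress, out var events))
        {
            events = new List<CalendarEvent>();
            _eventsByAttendee[smtpAddress] = events;
        }

        events.Add(new CalendarEvent { Start = start, End = end, FreeBusyStatus = status });
        return this; // fluent, so test data can be chained
    }

    public IEnumerable<Availability> Get(IEnumerable<AttendeeInfo> attendees, TimeWindow timeWindow, AvailabilityData requestedData)
    {
        CallCount++;

        // One Availability per requested attendee.
        return attendees
            .Select(attendee => new Availability
            {
                ErrorCode = ServiceError.NoError,
                Events = EventsFor(attendee.SmtpAddress, timeWindow)
            })
            .ToList();
    }

    // Only the events that overlap the requested time window.
    private List<CalendarEvent> EventsFor(string smtpAddress, TimeWindow timeWindow)
    {
        if (!_eventsByAttendee.TryGetValue(smtpAddress, out var events))
        {
            return new List<CalendarEvent>();
        }

        return events.Where(e => e.Start < timeWindow.EndTime && e.End > timeWindow.StartTime).ToList();
    }
}
```

Registering a fake like this in the DI container in place of AvailabilityRepository lets you run the synchronization locally without an Exchange server.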

Step 5 – Inject the interface into the class with the business logic

The last step is to inject IAvailabilityRepository instead of ExchangeService and use the duplicated classes.

It is now possible to test the logic in this class.

```csharp
public class SynchronizeOutlookAppointmentsService
{
    private const int MAX_FREEBUSY_ATTENDEES = 100;
    private const int MAX_FREEBUSY_PERIOD = 60;

    private readonly IAvailabilityRepository _availabilityRepository;
    private readonly ILogger<SynchronizeOutlookAppointmentsService> _logger;

    public SynchronizeOutlookAppointmentsService(
        [NotNull] IAvailabilityRepository availabilityRepository,
        [NotNull] ILogger<SynchronizeOutlookAppointmentsService> logger)
    {
        Assert.ArgumentNotNull(logger, nameof(logger));
        Assert.ArgumentNotNull(availabilityRepository, nameof(availabilityRepository));

        _availabilityRepository = availabilityRepository;
        _logger = logger;
    }

    public void SynchronizeOutlookAppointments()
    {
        DateTime now = DateTime.Now;

        var availabilities = GetAvailability(now, GetToDate(now), GetEmailAddress());

        CheckWorkSchedules(now, availabilities);
        CheckRules(now, availabilities);
        CheckPlannedMeetings(now, availabilities);

        var delete = ShouldBeDeleted(availabilities);
        var update = ShouldBeUpdated(availabilities);
        var added = ShouldBeAdded(availabilities);

        HandleDeletedAppointments(now, delete);
        HandleUpdatedAppointments(now, update);
        HandleAddedAppointments(now, added);
    }

    // TODO... split into a number of smaller classes where each class has a single responsibility 🙂
}
```

Of course, this class should be refactored and split into a number of smaller classes where each class has a single responsibility, but that is a blog post for another day.
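Because the business logic now depends only on IAvailabilityRepository, it can be exercised with a stub. The sketch below uses a hypothetical `BusyEventCollector`, standing in for a small slice of the elided 700+ lines (keep busy events, skip attendees whose lookup returned an error), and a hypothetical `StubAvailabilityRepository`. The EWS types are replaced by minimal stand-ins so the sketch compiles without the EWS package; in the real solution they come from EWS.

```csharp
using System;
using System.Collections.Generic;
using System.Linq;

// Stand-ins for the EWS types (assumption: only the members this sketch needs).
public enum ServiceError { NoError, ErrorMailRecipientNotFound }
public enum LegacyFreeBusyStatus { Free, Busy }
public enum AvailabilityData { FreeBusy }
public class AttendeeInfo { public AttendeeInfo(string smtpAddress) { SmtpAddress = smtpAddress; } public string SmtpAddress { get; } }
public class TimeWindow { public TimeWindow(DateTime startTime, DateTime endTime) { StartTime = startTime; EndTime = endTime; } public DateTime StartTime { get; } public DateTime EndTime { get; } }

// Duplicated classes (Step 1) and the interface (Step 3).
public class Availability
{
    public ServiceError ErrorCode { get; set; }
    public string ErrorMessage { get; set; } = string.Empty;
    public IEnumerable<CalendarEvent> Events { get; set; }
}

public class CalendarEvent
{
    public DateTime Start { get; set; }
    public DateTime End { get; set; }
    public LegacyFreeBusyStatus FreeBusyStatus { get; set; }
}

public interface IAvailabilityRepository
{
    IEnumerable<Availability> Get(IEnumerable<AttendeeInfo> attendees, TimeWindow timeWindow, AvailabilityData requestedData);
}

// Hypothetical stub: returns the availabilities a test supplies and records the requested window.
public class StubAvailabilityRepository : IAvailabilityRepository
{
    private readonly IEnumerable<Availability> _availabilities;

    public StubAvailabilityRepository(IEnumerable<Availability> availabilities) { _availabilities = availabilities; }

    public TimeWindow LastTimeWindow { get; private set; }

    public IEnumerable<Availability> Get(IEnumerable<AttendeeInfo> attendees, TimeWindow timeWindow, AvailabilityData requestedData)
    {
        LastTimeWindow = timeWindow;
        return _availabilities;
    }
}

// Hypothetical slice of business logic: busy events for one mailbox, ignoring failed lookups.
public class BusyEventCollector
{
    private readonly IAvailabilityRepository _availabilityRepository;

    public BusyEventCollector(IAvailabilityRepository availabilityRepository) { _availabilityRepository = availabilityRepository; }

    public IReadOnlyList<CalendarEvent> GetBusyEvents(string emailAddress, DateTime start, DateTime end)
    {
        var availabilities = _availabilityRepository.Get(
            new[] { new AttendeeInfo(emailAddress) },
            new TimeWindow(start, end),
            AvailabilityData.FreeBusy);

        return availabilities
            .Where(availability => availability.ErrorCode == ServiceError.NoError)
            .SelectMany(availability => availability.Events ?? Enumerable.Empty<CalendarEvent>())
            .Where(calendarEvent => calendarEvent.FreeBusyStatus == LegacyFreeBusyStatus.Busy)
            .OrderBy(calendarEvent => calendarEvent.Start)
            .ToList();
    }
}
```

In a real test project the assertions would sit in an xUnit, NUnit or MSTest test method, and since IAvailabilityRepository is an interface, the hand-written stub could just as well be a double from a mocking library such as Moq.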

I hope this post will help you ensure more of your code can be tested.

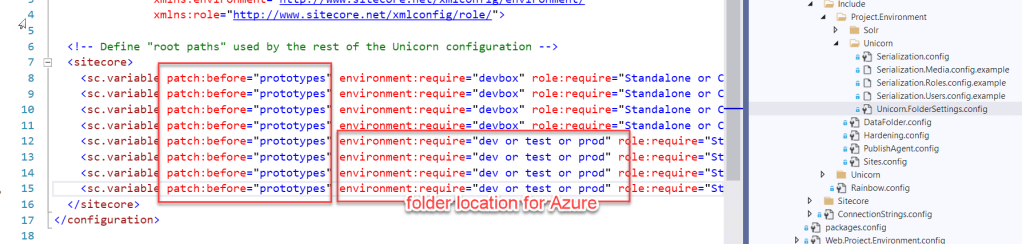

In addition, this pattern is effective for abstracting away dependencies on third-party systems, such as Solr, and on many other Sitecore APIs.